After spending a week at the HWT, I must say I’m encouraged to see how far the NWP world has come in recent years. For instance, to keep my mind occupied on my flight to Norman last week, I thought it would be neat to read a paper produced by the SPC on the Super Tornado Outbreak of 1974. If I remember correctly, the old LFM model had a grid spacing of 190.5 km! Reading that and then coming to the HWT and seeing model output at grid spacings as fine as 1 km is absolutely amazing in my opinion. This is a testament to all the model developers out there who work diligently on a daily basis to produce better models for forecasters in the field. If nothing else, the HWT opportunity made me appreciate the efforts of the model developers more than I ever had before.

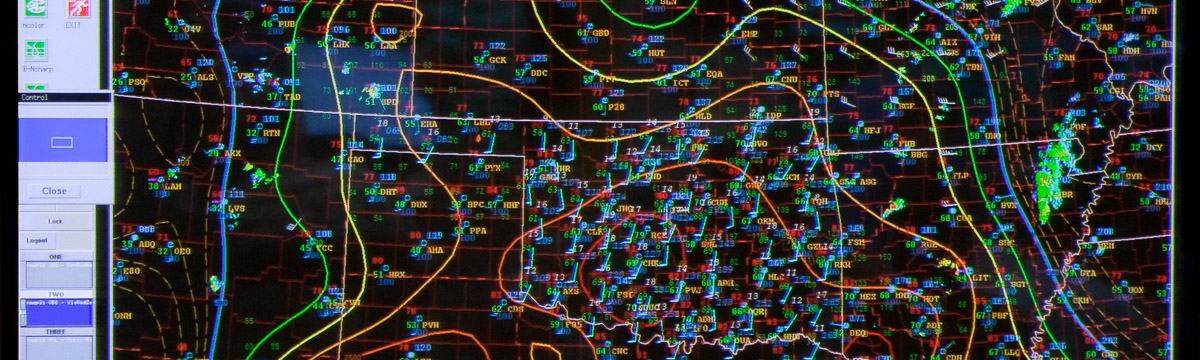

Although these models can provide better guidance on basic severe wx questions, such as convective mode and intensity, they only show output (simulated refl, updraft helicity, etc.) on a very small scale. If that output is taken at face value, critical forecasting decisions can be made without an adequate handle on the overall synoptic and mesoscale pattern. Thus, even with all the high resolution model output, one must still interrogate the atmosphere using a forecast funnel methodology to develop a convective mode framework to work from. Sadly, if high resolution model output is taken at face value without any “behind the scenes” work beforehand, I can see many blown/missed forecasts, as forecasters would be forecasting “blind.” Many factors must be taken into account when developing a convective forecast, and unfortunately just looking at the new high res model output will likely lead to more questions than answers. To answer these questions, a detailed analysis done beforehand can show why a particular model may be producing one thing as opposed to another.

Looking back at some of the old severe wx forecasting handbooks, one thing remains clear: much can be learned about the developing synoptic/mesoscale patterns through pattern recognition. Some of the old bow echo/derecho papers (Johns and Hirt, 1987) and a whole list of others have reiterated this point. How many times last week were the models producing a bow type signature during the overnight hours? Situations like these commonly need deep vertical shear, and unfortunately not much shear was available for organized cold pools when the H50 flow was only 5-10 knots. This is just one instance where having a good conceptual model in the back of your mind can assist in the forecasting process.

As for the models, more often than not, I was pleased by the 4-km AFWA runs. For the activity that developed on Tue (05/12), the 00/12 UTC AFWA runs had a better handle on the low-level moisture intrusion up the Palo Duro Canyon just SE of AMA. A supercell resulted, which led to several wind/hail reports. A look back the following day at the Practically Perfect Forecast based on updraft helicity showed a bullseye centered over the area in the AFWA output. This is more than likely a testament to different initial conditions, as the AFWA utilizes the NASA LIS data. This can pay huge dividends for offices along the TX Caprock, where these low-level moisture intrusions, along with a backed wind profile (meso-low formation), have been documented to assist in tornadogenesis across the canyon locations.

Posted by Chris G.